Infrastructure in laboratories has always been considered a cost. It is not a value in itself: it contributes to a result, but it is not the result. The goal is always to carry out an experiment or to produce something, and the laboratory infrastructure is the set of tools for getting there.

The better the toolset, the better the outcome, in theory. But suitable laboratory infrastructure is very expensive. What options are there to optimize its cost with the vision of the ‘lab of tomorrow’ in mind?

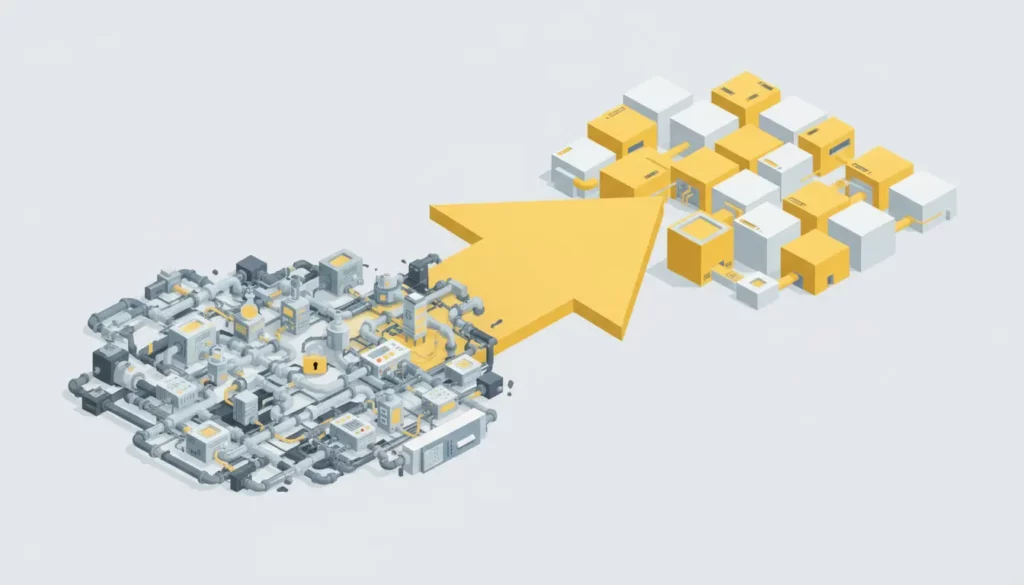

Lab systems today (STATUS QUO)

Multiple fixed configurations of systems are dedicated to different process-related functions (such as bioreactor, filtration, sampling, and fill & finish systems).

After each of these systems is used in a specific phase of the process, it sits idle until the next iteration of that phase, when it can be used again. Also, the hardware used in one phase of the process cannot be reused in another phase, because it was designed to address only one specific function. Idle equipment is money spent on hardware that sits on the shelf, and waiting time costs money.

Different systems often come from different vendors, so it is not easy to integrate them into a smooth, continuous process flow. Vendors keep control of lab setups through a hermetic (closed) approach with limited or very costly integration options.

When the process requires parallel processing (e.g., parallel fermentation in 6–8 vessels), multiple setups of the same hardware are needed. As a result, the process data is distributed across multiple control units and must be integrated to provide an overview and analytics across the results of parallel experiments. Parallel processing solutions with centralized control do exist, but they are very expensive and often beyond the reach of research labs, startups, and smaller companies focused on process development.

The time needed to learn and operate hardware from different vendors also matters. Even where equipment from different vendors can be integrated for reuse or parallel execution, the result is always complex, which steepens the learning curve: more complexity means more time spent on training.

Lab infrastructure optimization involves tackling a complex set of challenges that directly influence operational efficiency, innovation potential, and cost-effectiveness.

The most pressing issues include:

- lack of reusability of the lab hardware across different functions or phases in the process

- lack of easy and cost-effective integration for parallel processing scenarios

- vendor lock-in, which blocks progress and limits the flexibility to experiment efficiently and affordably

- fixed configurations, and the high cost of modifying existing lab systems to support new configurations (e.g., adding a pump to the system)

- multiple data sources, which add integration costs to bring data from parallel processing into one database for centralized reporting and analytics

- complex systems, which mean long training times

Given the need to optimize cost and time, and the current state of laboratory equipment vendors’ portfolios, let’s look at what could enable cost and time optimization in practice.

Lab of tomorrow with dynamic software-defined lab systems (VISION)

1. Laboratory systems are composed of ‘Lego®-like’ hardware modules that can be reused in different functional setups. Each setup is configured in software to provide a specific function within the process (such as a bioreactor, filtration system, or sampling system).

2. Hardware modules support multiple processes through modularity and flexibility, which minimizes the amount of hardware needed to execute parallel processes. Connected hardware is dynamically discovered and added to the system.

3. Control software provides the flexibility to manage multiple configurations of different systems, and for each of them, multiple processes with different experimental parameters can be defined. Because processes are deployed dynamically, the P&ID (piping and instrumentation diagram) is also built dynamically, so no integrator is needed to redefine a custom P&ID when the process changes.

4. Standardized software and hardware connectors shorten integration time and eliminate the need for dedicated automation projects. The system is not hermetic and is not controlled solely by the vendor who delivered it.

5. Processing is centralized, with data historization and reporting for parallel process execution. This speeds up reporting and drawing conclusions from experiments, and provides centralized audit trail records.

6. Out-of-the-box plug & play capabilities, combined with flexible licensing options for intuitive control software, shorten the learning curve to operate the system efficiently.
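To make the software-defined idea more concrete, here is a minimal Python sketch under simplifying assumptions. `Module`, `SystemConfig`, `ModulePool`, and `build_pid` are hypothetical names for illustration, not any vendor's API, and modules are matched by kind only (a real platform would also check compatibility, calibration, and so on). It shows discovered modules being pooled, bound to roles in a system defined purely in software, released, and then reused by a different system, with a simple P&ID edge list derived from the configuration rather than drawn by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    """A discovered hardware module (hypothetical model), e.g. a pump or vessel."""
    module_id: str
    kind: str  # e.g. "pump", "vessel", "filter", "sensor"


@dataclass
class SystemConfig:
    """A system defined purely in software."""
    name: str
    roles: dict[str, str]               # role name -> required module kind
    connections: list[tuple[str, str]]  # (from_role, to_role) flow paths


class ModulePool:
    """Registry of connected hardware that systems borrow modules from."""

    def __init__(self) -> None:
        self.available: dict[str, Module] = {}
        self.in_use: dict[str, str] = {}  # module_id -> name of the system using it

    def discover(self, module: Module) -> None:
        """Register a newly connected module (a plug & play event in a real system)."""
        self.available[module.module_id] = module

    def assemble(self, config: SystemConfig) -> dict[str, Module]:
        """Bind each role to a free module of the required kind.

        Raises LookupError if any role cannot be filled; in that case no
        module is marked as in use.
        """
        bound: dict[str, Module] = {}
        for role, kind in config.roles.items():
            taken = {m.module_id for m in bound.values()}
            candidate = next(
                (m for m in self.available.values()
                 if m.kind == kind
                 and m.module_id not in self.in_use
                 and m.module_id not in taken),
                None,
            )
            if candidate is None:
                raise LookupError(
                    f"{config.name}: no free {kind!r} module for role {role!r}")
            bound[role] = candidate
        for module in bound.values():
            self.in_use[module.module_id] = config.name
        return bound

    def release(self, config: SystemConfig) -> None:
        """Return a system's modules to the pool so other systems can reuse them."""
        self.in_use = {mid: name for mid, name in self.in_use.items()
                       if name != config.name}


def build_pid(config: SystemConfig, bound: dict[str, Module]) -> list[str]:
    """Derive a simple P&ID edge list from the configuration and its bound modules."""
    return [f"{bound[a].module_id} -> {bound[b].module_id}"
            for a, b in config.connections]


if __name__ == "__main__":
    pool = ModulePool()
    for m in (Module("P1", "pump"), Module("V1", "vessel"), Module("F1", "filter")):
        pool.discover(m)

    bioreactor = SystemConfig("bioreactor", {"feed": "pump", "reactor": "vessel"},
                              [("feed", "reactor")])
    filtration = SystemConfig("filtration", {"feed": "pump", "filter": "filter"},
                              [("feed", "filter")])

    print(build_pid(bioreactor, pool.assemble(bioreactor)))  # ['P1 -> V1']
    pool.release(bioreactor)  # pump P1 becomes free again
    print(build_pid(filtration, pool.assemble(filtration)))  # ['P1 -> F1']
```

The same pump `P1` serves the bioreactor and then the filtration system, which is the reuse the list above describes; trying to assemble both at once fails cleanly because only one pump is available.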

Together, these points shape the vision of the lab of tomorrow and mark a significant shift in how experiments and manufacturing requirements are approached:

- Tomorrow, the process defines which hardware is needed to execute process phases or functions, not the other way around.

- Available systems do not limit the scope of specific process functions required for experimentation or manufacturing, because the systems are defined based on the needs of the processes.

- The user (scientist/operator/lab manager) defines what hardware components can be reused between systems and processes to optimize cost.

- Plug & play with dynamic software-defined systems (and processes) drastically reduces setup time and improves repeatability.

- Centralized processing with non-hermetic integration options allows cost-efficient integration with other infrastructure components (LIMS, ELN, DCS, etc.).

Cost & time optimizers summary

- Decrease time spent on hardware integration -> utilize plug & play capabilities

- Reduce the cost of hardware setups -> re-use hardware modules to build systems yourself (no integrator involvement needed)

- Reduce the cost of parallel processing -> use hardware that supports parallel execution and a software platform that centralizes data processing

- Decrease deployment time -> utilize a software-defined system with multiple configurations of systems and processes, and a dynamic P&ID (no integrator needed to modify the P&ID)

- Increase the number of experiments with different options and scenarios -> utilize the flexibility of the hardware modules and control platform to define multiple processes with different parameters

- Reduce the time needed to analyze and report on multi-system, multi-process execution -> utilize centralized user management, audit trail, reporting, graphs, etc.
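Centralized processing of parallel runs can be sketched in the same spirit. The Python below is a minimal, hypothetical example (`Reading` and `CentralHistorian` are illustrative names, not a real product's API): every reading from every vessel goes into one store, each write is logged in an audit trail with user and time, and a per-run summary lets parallel experiments be compared without first merging data from separate control units. A real historian would add persistence, user management, and access control.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone
from statistics import mean


@dataclass(frozen=True)
class Reading:
    run_id: str      # one run in a parallel experiment, e.g. a fermentation vessel
    parameter: str   # e.g. "pH" or "temperature_C"
    value: float
    timestamp: datetime


class CentralHistorian:
    """Hypothetical single store for data from all parallel runs, with an audit trail."""

    def __init__(self) -> None:
        self.readings: list[Reading] = []
        self.audit_trail: list[tuple[datetime, str, str]] = []  # (when, user, action)

    def record(self, reading: Reading, user: str) -> None:
        """Store a reading and log who recorded it and when."""
        self.readings.append(reading)
        action = f"recorded {reading.parameter}={reading.value} for {reading.run_id}"
        self.audit_trail.append((datetime.now(timezone.utc), user, action))

    def summary(self, parameter: str) -> dict[str, float]:
        """Mean value of one parameter per run, for side-by-side comparison."""
        by_run: dict[str, list[float]] = defaultdict(list)
        for r in self.readings:
            if r.parameter == parameter:
                by_run[r.run_id].append(r.value)
        return {run: mean(values) for run, values in sorted(by_run.items())}


if __name__ == "__main__":
    historian = CentralHistorian()
    t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
    for run, values in {"vessel-1": [7.0, 7.5], "vessel-2": [6.5, 6.75]}.items():
        for v in values:
            historian.record(Reading(run, "pH", v, t0), user="operator")
    print(historian.summary("pH"))     # {'vessel-1': 7.25, 'vessel-2': 6.625}
    print(len(historian.audit_trail))  # 4
```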

Moving to modular, flexible, software-defined lab systems is a big step forward: it improves efficiency, optimizes daily operations, and speeds up experiments, while also opening up exciting new opportunities for innovation and research.